Services¶

Configuring How to Reach the Services

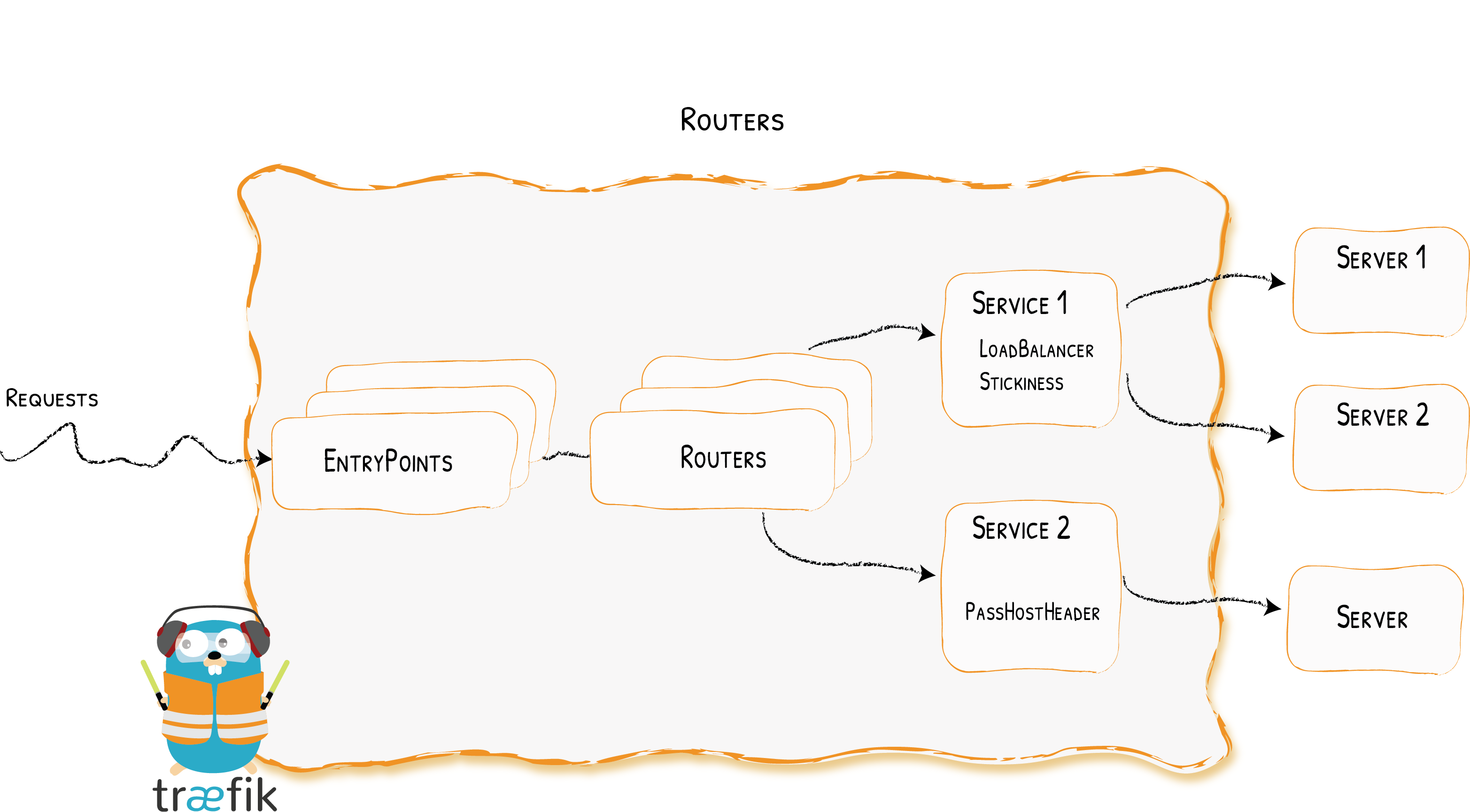

The Services are responsible for configuring how to reach the actual services that will eventually handle the incoming requests.

Configuration Examples¶

Declaring an HTTP Service with Two Servers -- Using the File Provider

## Dynamic configuration

http:

services:

my-service:

loadBalancer:

servers:

- url: "http://<private-ip-server-1>:<private-port-server-1>/"

- url: "http://<private-ip-server-2>:<private-port-server-2>/"

## Dynamic configuration

[http.services]

[http.services.my-service.loadBalancer]

[[http.services.my-service.loadBalancer.servers]]

url = "http://<private-ip-server-1>:<private-port-server-1>/"

[[http.services.my-service.loadBalancer.servers]]

url = "http://<private-ip-server-2>:<private-port-server-2>/"

Declaring a TCP Service with Two Servers -- Using the File Provider

tcp:

services:

my-service:

loadBalancer:

servers:

- address: "<private-ip-server-1>:<private-port-server-1>"

- address: "<private-ip-server-2>:<private-port-server-2>"

## Dynamic configuration

[tcp.services]

[tcp.services.my-service.loadBalancer]

[[tcp.services.my-service.loadBalancer.servers]]

address = "<private-ip-server-1>:<private-port-server-1>"

[[tcp.services.my-service.loadBalancer.servers]]

address = "<private-ip-server-2>:<private-port-server-2>"

Declaring a UDP Service with Two Servers -- Using the File Provider

udp:

services:

my-service:

loadBalancer:

servers:

- address: "<private-ip-server-1>:<private-port-server-1>"

- address: "<private-ip-server-2>:<private-port-server-2>"

## Dynamic configuration

[udp.services]

[udp.services.my-service.loadBalancer]

[[udp.services.my-service.loadBalancer.servers]]

address = "<private-ip-server-1>:<private-port-server-1>"

[[udp.services.my-service.loadBalancer.servers]]

address = "<private-ip-server-2>:<private-port-server-2>"

Configuring HTTP Services¶

Servers Load Balancer¶

The load balancers are able to load balance the requests between multiple instances of your programs.

Each service has a load-balancer, even if there is only one server to forward traffic to.

Declaring a Service with Two Servers (with Load Balancing) -- Using the File Provider

http:

services:

my-service:

loadBalancer:

servers:

- url: "http://private-ip-server-1/"

- url: "http://private-ip-server-2/"

## Dynamic configuration

[http.services]

[http.services.my-service.loadBalancer]

[[http.services.my-service.loadBalancer.servers]]

url = "http://private-ip-server-1/"

[[http.services.my-service.loadBalancer.servers]]

url = "http://private-ip-server-2/"

Servers¶

Servers declare a single instance of your program.

The url option points to a specific instance.

Paths in the servers' url have no effect.

If you want the requests to be sent to a specific path on your servers, configure your routers to use a corresponding middleware (e.g. the AddPrefix or ReplacePath middlewares).
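As a minimal sketch of that advice, the dynamic configuration below attaches an AddPrefix middleware to a router so that requests reach the servers under /api. The router name, the rule, the middleware name, and the prefix are all assumptions made for illustration.

```yaml
## Dynamic configuration (illustrative sketch; names, rule, and prefix are assumptions)
http:
  routers:
    my-router:
      rule: "Host(`example.com`)"
      middlewares:
        - add-api-prefix
      service: my-service

  middlewares:
    add-api-prefix:
      addPrefix:
        prefix: "/api"

  services:
    my-service:
      loadBalancer:
        servers:
          # The path is set by the middleware, not by the server URL.
          - url: "http://private-ip-server-1/"
```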

A Service with One Server -- Using the File Provider

## Dynamic configuration

http:

services:

my-service:

loadBalancer:

servers:

- url: "http://private-ip-server-1/"

## Dynamic configuration

[http.services]

[http.services.my-service.loadBalancer]

[[http.services.my-service.loadBalancer.servers]]

url = "http://private-ip-server-1/"

Load-balancing¶

For now, only round robin load balancing is supported:

Load Balancing -- Using the File Provider

## Dynamic configuration

http:

services:

my-service:

loadBalancer:

servers:

- url: "http://private-ip-server-1/"

- url: "http://private-ip-server-2/"

## Dynamic configuration

[http.services]

[http.services.my-service.loadBalancer]

[[http.services.my-service.loadBalancer.servers]]

url = "http://private-ip-server-1/"

[[http.services.my-service.loadBalancer.servers]]

url = "http://private-ip-server-2/"

Sticky sessions¶

When sticky sessions are enabled, a cookie is set on the initial request and response to let the client know which server handles the first response. On subsequent requests, to keep the session alive with the same server, the client should resend the same cookie.

Stickiness on multiple levels

When chaining or mixing load-balancers (e.g. a load-balancer of servers is one of the "children" of a load-balancer of services), for stickiness to work all the way, the option needs to be specified at all required levels. Which means the client needs to send a cookie with as many key/value pairs as there are sticky levels.

Stickiness & Unhealthy Servers

If the server specified in the cookie becomes unhealthy, the request will be forwarded to a new server (and the cookie will keep track of the new server).
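Whether a server counts as unhealthy depends on the health check described later on this page. The sketch below simply combines the sticky and healthCheck options on one load balancer; the /health path and the durations are assumed values.

```yaml
## Dynamic configuration (illustrative sketch; path and durations are assumptions)
http:
  services:
    my-service:
      loadBalancer:
        sticky:
          cookie: {}
        healthCheck:
          path: /health
          interval: "10s"
          timeout: "3s"
        servers:
          - url: "http://private-ip-server-1/"
          - url: "http://private-ip-server-2/"
```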

Cookie Name

The default cookie name is an abbreviation of a sha1 (e.g. _1d52e).

Secure & HTTPOnly & SameSite flags

By default, the affinity cookie is created without those flags. This can be changed through configuration.

SameSite can be none, lax, strict or empty.

Adding Stickiness -- Using the File Provider

## Dynamic configuration

http:

services:

my-service:

loadBalancer:

sticky:

cookie: {}

## Dynamic configuration

[http.services]

[http.services.my-service]

[http.services.my-service.loadBalancer.sticky.cookie]

Adding Stickiness with custom Options -- Using the File Provider

## Dynamic configuration

http:

services:

my-service:

loadBalancer:

sticky:

cookie:

name: my_sticky_cookie_name

secure: true

httpOnly: true

sameSite: "none"

## Dynamic configuration

[http.services]

[http.services.my-service]

[http.services.my-service.loadBalancer.sticky.cookie]

name = "my_sticky_cookie_name"

secure = true

httpOnly = true

sameSite = "none"

Setting Stickiness on all the required levels -- Using the File Provider

## Dynamic configuration

http:

services:

wrr1:

weighted:

sticky:

cookie:

name: lvl1

services:

- name: whoami1

weight: 1

- name: whoami2

weight: 1

whoami1:

loadBalancer:

sticky:

cookie:

name: lvl2

servers:

- url: http://127.0.0.1:8081

- url: http://127.0.0.1:8082

whoami2:

loadBalancer:

sticky:

cookie:

name: lvl2

servers:

- url: http://127.0.0.1:8083

- url: http://127.0.0.1:8084

## Dynamic configuration

[http.services]

[http.services.wrr1]

[http.services.wrr1.weighted.sticky.cookie]

name = "lvl1"

[[http.services.wrr1.weighted.services]]

name = "whoami1"

weight = 1

[[http.services.wrr1.weighted.services]]

name = "whoami2"

weight = 1

[http.services.whoami1]

[http.services.whoami1.loadBalancer]

[http.services.whoami1.loadBalancer.sticky.cookie]

name = "lvl2"

[[http.services.whoami1.loadBalancer.servers]]

url = "http://127.0.0.1:8081"

[[http.services.whoami1.loadBalancer.servers]]

url = "http://127.0.0.1:8082"

[http.services.whoami2]

[http.services.whoami2.loadBalancer]

[http.services.whoami2.loadBalancer.sticky.cookie]

name = "lvl2"

[[http.services.whoami2.loadBalancer.servers]]

url = "http://127.0.0.1:8083"

[[http.services.whoami2.loadBalancer.servers]]

url = "http://127.0.0.1:8084"

To keep a session open with the same server, the client would then need to specify the two levels within the cookie for each request, e.g. with curl:

curl -b "lvl1=whoami1; lvl2=http://127.0.0.1:8081" http://localhost:8000

Health Check¶

Configure a health check to remove unhealthy servers from the load balancing rotation.

Traefik will consider your servers healthy as long as they return status codes between 2XX and 3XX to the health check requests (carried out every interval).

Below are the available options for the health check mechanism:

path is appended to the server URL to set the health check endpoint.

scheme, if defined, will replace the server URL scheme for the health check endpoint.

hostname, if defined, will apply the Host header hostname to the health check request.

port, if defined, will replace the server URL port for the health check endpoint.

interval defines the frequency of the health check calls.

timeout defines the maximum duration Traefik will wait for a health check request before considering the server failed (unhealthy).

headers defines custom headers to be sent to the health check endpoint.

followRedirects defines whether redirects should be followed during the health check calls (default: true).
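The sketch below combines several of these options, including hostname and followRedirects. The hostname, port, and server URL are assumed values chosen only for illustration.

```yaml
## Dynamic configuration (illustrative sketch; all values are assumptions)
http:
  services:
    Service-1:
      loadBalancer:
        healthCheck:
          path: /health            # appended to the server URL
          scheme: http             # replaces the server URL scheme
          hostname: example.com    # sent as the Host header
          port: 8080               # replaces the server URL port
          followRedirects: false   # do not follow redirects (default: true)
        servers:
          - url: "https://private-ip-server-1/"
```

Following the option descriptions above, the health check request for this server would go to http://private-ip-server-1:8080/health with the Host header set to example.com.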

Interval & Timeout Format

Interval and timeout are to be given in a format understood by time.ParseDuration. The interval must be greater than the timeout. If configuration doesn't reflect this, the interval will be set to timeout + 1 second.
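A short sketch of the duration syntax, using assumed values. Strings such as "500ms", "10s", and "1m30s" are all accepted by time.ParseDuration.

```yaml
## Dynamic configuration (illustrative sketch; values are assumptions)
http:
  services:
    Service-1:
      loadBalancer:
        healthCheck:
          path: /health
          # The interval is greater than the timeout, as required.
          interval: "1m30s"
          timeout: "500ms"
```

By the rule above, a configuration with interval "2s" and timeout "5s" would instead end up with an interval of 6 seconds (timeout + 1 second).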

Recovering Servers

Traefik keeps monitoring the health of unhealthy servers.

If a server has recovered (returning 2xx -> 3xx responses again), it will be added back to the load balancer rotation pool.

Health check in Kubernetes

The Traefik health check is not available for the kubernetesCRD and kubernetesIngress providers because Kubernetes already has a health check mechanism.

Unhealthy pods will be removed by Kubernetes (cf. liveness documentation).

Custom Interval & Timeout -- Using the File Provider

## Dynamic configuration

http:

services:

Service-1:

loadBalancer:

healthCheck:

path: /health

interval: "10s"

timeout: "3s"

## Dynamic configuration

[http.services]

[http.services.Service-1]

[http.services.Service-1.loadBalancer.healthCheck]

path = "/health"

interval = "10s"

timeout = "3s"

Custom Port -- Using the File Provider

## Dynamic configuration

http:

services:

Service-1:

loadBalancer:

healthCheck:

path: /health

port: 8080

## Dynamic configuration

[http.services]

[http.services.Service-1]

[http.services.Service-1.loadBalancer.healthCheck]

path = "/health"

port = 8080

Custom Scheme -- Using the File Provider

## Dynamic configuration

http:

services:

Service-1:

loadBalancer:

healthCheck:

path: /health

scheme: http

## Dynamic configuration

[http.services]

[http.services.Service-1]

[http.services.Service-1.loadBalancer.healthCheck]

path = "/health"

scheme = "http"

Additional HTTP Headers -- Using the File Provider

## Dynamic configuration

http:

services:

Service-1:

loadBalancer:

healthCheck:

path: /health

headers:

My-Custom-Header: foo

My-Header: bar

## Dynamic configuration

[http.services]

[http.services.Service-1]

[http.services.Service-1.loadBalancer.healthCheck]

path = "/health"

[http.services.Service-1.loadBalancer.healthCheck.headers]

My-Custom-Header = "foo"

My-Header = "bar"

Pass Host Header¶

The passHostHeader option controls whether the client Host header is forwarded to the server.

By default, passHostHeader is true.

Don't forward the host header -- Using the File Provider

## Dynamic configuration

http:

services:

Service01:

loadBalancer:

passHostHeader: false

## Dynamic configuration

[http.services]

[http.services.Service01]

[http.services.Service01.loadBalancer]

passHostHeader = false

ServersTransport¶

serversTransport references a ServersTransport configuration to use for the communication between Traefik and your servers.

Specify a transport -- Using the File Provider

## Dynamic configuration

http:

services:

Service01:

loadBalancer:

serversTransport: mytransport

## Dynamic configuration

[http.services]

[http.services.Service01]

[http.services.Service01.loadBalancer]

serversTransport = "mytransport"

Info

If no serversTransport is specified, the default@internal will be used.

The default@internal serversTransport is created from the static configuration.
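For illustration, and assuming the static configuration is provided as a YAML file, the settings picked up by default@internal could be declared in the top-level serversTransport section. The values below are assumptions.

```yaml
## Static configuration (illustrative sketch; values are assumptions)
serversTransport:
  maxIdleConnsPerHost: 7
  forwardingTimeouts:
    dialTimeout: "1s"
```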

Response Forwarding¶

This section is about configuring how Traefik forwards the response from the backend server to the client.

Below are the available options for the Response Forwarding mechanism:

FlushInterval specifies the interval in between flushes to the client while copying the response body. It is a duration in milliseconds, defaulting to 100. A negative value means to flush immediately after each write to the client. The FlushInterval is ignored when ReverseProxy recognizes a response as a streaming response; for such responses, writes are flushed to the client immediately.

Using a custom FlushInterval -- Using the File Provider

## Dynamic configuration

http:

services:

Service-1:

loadBalancer:

responseForwarding:

flushInterval: 1s

## Dynamic configuration

[http.services]

[http.services.Service-1]

[http.services.Service-1.loadBalancer.responseForwarding]

flushInterval = "1s"

ServersTransport¶

ServersTransport configures the transport between Traefik and your servers.
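As a rough sketch of how the pieces fit together, the configuration below declares a transport and references it from a service through the serversTransport option. It only reuses option names documented on this page; the concrete values and the https server URL are assumptions.

```yaml
## Dynamic configuration (illustrative sketch; values are assumptions)
http:
  serversTransports:
    mytransport:
      serverName: "myhost"
      rootCAs:
        - foo.crt
      forwardingTimeouts:
        dialTimeout: "1s"

  services:
    Service01:
      loadBalancer:
        # Refers to the transport declared above by name.
        serversTransport: mytransport
        servers:
          - url: "https://private-ip-server-1/"
```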

ServerName¶

Optional

serverName configures the server name that will be used for SNI.

## Dynamic configuration

http:

serversTransports:

mytransport:

serverName: "myhost"

## Dynamic configuration

[http.serversTransports.mytransport]

serverName = "myhost"

apiVersion: traefik.containo.us/v1alpha1

kind: ServersTransport

metadata:

name: mytransport

namespace: default

spec:

serverName: "test"

Certificates¶

Optional

certificates is the list of certificates (as file paths, or data bytes)

that will be set as client certificates for mTLS.

## Dynamic configuration

http:

serversTransports:

mytransport:

certificates:

- certFile: foo.crt

keyFile: bar.crt

## Dynamic configuration

[[http.serversTransports.mytransport.certificates]]

certFile = "foo.crt"

keyFile = "bar.crt"

apiVersion: traefik.containo.us/v1alpha1

kind: ServersTransport

metadata:

name: mytransport

namespace: default

spec:

certificatesSecrets:

- mycert

---

apiVersion: v1

kind: Secret

metadata:

name: mycert

data:

tls.crt: LS0tLS1CRUdJTiBDRVJUSUZJQ0FURS0tLS0tCi0tLS0tRU5EIENFUlRJRklDQVRFLS0tLS0=

tls.key: LS0tLS1CRUdJTiBQUklWQVRFIEtFWS0tLS0tCi0tLS0tRU5EIFBSSVZBVEUgS0VZLS0tLS0=

insecureSkipVerify¶

Optional

insecureSkipVerify disables SSL certificate verification.

## Dynamic configuration

http:

serversTransports:

mytransport:

insecureSkipVerify: true

## Dynamic configuration

[http.serversTransports.mytransport]

insecureSkipVerify = true

apiVersion: traefik.containo.us/v1alpha1

kind: ServersTransport

metadata:

name: mytransport

namespace: default

spec:

insecureSkipVerify: true

rootCAs¶

Optional

rootCAs is the list of certificates (as file paths, or data bytes)

that will be set as Root Certificate Authorities when using a self-signed TLS certificate.

## Dynamic configuration

http:

serversTransports:

mytransport:

rootCAs:

- foo.crt

- bar.crt

## Dynamic configuration

[http.serversTransports.mytransport]

rootCAs = ["foo.crt", "bar.crt"]

apiVersion: traefik.containo.us/v1alpha1

kind: ServersTransport

metadata:

name: mytransport

namespace: default

spec:

rootCAsSecrets:

- myca

---

apiVersion: v1

kind: Secret

metadata:

name: myca

data:

tls.crt: LS0tLS1CRUdJTiBDRVJUSUZJQ0FURS0tLS0tCi0tLS0tRU5EIENFUlRJRklDQVRFLS0tLS0=

maxIdleConnsPerHost¶

Optional, Default=2

If non-zero, maxIdleConnsPerHost controls the maximum idle (keep-alive) connections to keep per-host.

## Dynamic configuration

http:

serversTransports:

mytransport:

maxIdleConnsPerHost: 7

## Dynamic configuration

[http.serversTransports.mytransport]

maxIdleConnsPerHost = 7

apiVersion: traefik.containo.us/v1alpha1

kind: ServersTransport

metadata:

name: mytransport

namespace: default

spec:

maxIdleConnsPerHost: 7

forwardingTimeouts¶

forwardingTimeouts groups the timeouts that apply when forwarding requests to the backend servers.

forwardingTimeouts.dialTimeout¶

Optional, Default=30s

dialTimeout is the maximum duration allowed for a connection to a backend server to be established.

Zero means no timeout.

## Dynamic configuration

http:

serversTransports:

mytransport:

forwardingTimeouts:

dialTimeout: "1s"

## Dynamic configuration

[http.serversTransports.mytransport.forwardingTimeouts]

dialTimeout = "1s"

apiVersion: traefik.containo.us/v1alpha1

kind: ServersTransport

metadata:

name: mytransport

namespace: default

spec:

forwardingTimeouts:

dialTimeout: "1s"

forwardingTimeouts.responseHeaderTimeout¶

Optional, Default=0s

responseHeaderTimeout, if non-zero, specifies the amount of time to wait for a server's response headers

after fully writing the request (including its body, if any).

This time does not include the time to read the response body.

Zero means no timeout.

## Dynamic configuration

http:

serversTransports:

mytransport:

forwardingTimeouts:

responseHeaderTimeout: "1s"

## Dynamic configuration

[http.serversTransports.mytransport.forwardingTimeouts]

responseHeaderTimeout = "1s"

apiVersion: traefik.containo.us/v1alpha1

kind: ServersTransport

metadata:

name: mytransport

namespace: default

spec:

forwardingTimeouts:

responseHeaderTimeout: "1s"

forwardingTimeouts.idleConnTimeout¶

Optional, Default=90s

idleConnTimeout is the maximum amount of time an idle (keep-alive) connection

will remain idle before closing itself.

Zero means no limit.

## Dynamic configuration

http:

serversTransports:

mytransport:

forwardingTimeouts:

idleConnTimeout: "1s"

## Dynamic configuration

[http.serversTransports.mytransport.forwardingTimeouts]

idleConnTimeout = "1s"

apiVersion: traefik.containo.us/v1alpha1

kind: ServersTransport

metadata:

name: mytransport

namespace: default

spec:

forwardingTimeouts:

idleConnTimeout: "1s"

Weighted Round Robin (service)¶

The WRR is able to load balance the requests between multiple services based on weights.

This strategy is only available to load balance between services and not between servers.

Supported Providers

This strategy can be defined currently with the File or IngressRoute providers.

## Dynamic configuration

http:

services:

app:

weighted:

services:

- name: appv1

weight: 3

- name: appv2

weight: 1

appv1:

loadBalancer:

servers:

- url: "http://private-ip-server-1/"

appv2:

loadBalancer:

servers:

- url: "http://private-ip-server-2/"

## Dynamic configuration

[http.services]

[http.services.app]

[[http.services.app.weighted.services]]

name = "appv1"

weight = 3

[[http.services.app.weighted.services]]

name = "appv2"

weight = 1

[http.services.appv1]

[http.services.appv1.loadBalancer]

[[http.services.appv1.loadBalancer.servers]]

url = "http://private-ip-server-1/"

[http.services.appv2]

[http.services.appv2.loadBalancer]

[[http.services.appv2.loadBalancer.servers]]

url = "http://private-ip-server-2/"

Mirroring (service)¶

Mirroring copies requests sent to a service to other services. Note that, by default, the whole request is buffered in memory while it is being mirrored. See the maxBodySize option in the example below for how to modify this behaviour.

Supported Providers

This strategy can be defined currently with the File or IngressRoute providers.

## Dynamic configuration

http:

services:

mirrored-api:

mirroring:

service: appv1

# maxBodySize is the maximum size in bytes allowed for the body of the request.

# If the body is larger, the request is not mirrored.

# Default value is -1, which means unlimited size.

maxBodySize: 1024

mirrors:

- name: appv2

percent: 10

appv1:

loadBalancer:

servers:

- url: "http://private-ip-server-1/"

appv2:

loadBalancer:

servers:

- url: "http://private-ip-server-2/"

## Dynamic configuration

[http.services]

[http.services.mirrored-api]

[http.services.mirrored-api.mirroring]

service = "appv1"

# maxBodySize is the maximum size in bytes allowed for the body of the request.

# If the body is larger, the request is not mirrored.

# Default value is -1, which means unlimited size.

maxBodySize = 1024

[[http.services.mirrored-api.mirroring.mirrors]]

name = "appv2"

percent = 10

[http.services.appv1]

[http.services.appv1.loadBalancer]

[[http.services.appv1.loadBalancer.servers]]

url = "http://private-ip-server-1/"

[http.services.appv2]

[http.services.appv2.loadBalancer]

[[http.services.appv2.loadBalancer.servers]]

url = "http://private-ip-server-2/"

Configuring TCP Services¶

General¶

Each of the fields of the service section represents a kind of service.

This means that, for each specified service, exactly one of the fields has to be enabled to define what kind of service is created.

Currently, the two available kinds are LoadBalancer and Weighted.

Servers Load Balancer¶

The servers load balancer is in charge of balancing the requests between the servers of the same service.

Declaring a Service with Two Servers -- Using the File Provider

## Dynamic configuration

tcp:

services:

my-service:

loadBalancer:

servers:

- address: "xx.xx.xx.xx:xx"

- address: "xx.xx.xx.xx:xx"

## Dynamic configuration

[tcp.services]

[tcp.services.my-service.loadBalancer]

[[tcp.services.my-service.loadBalancer.servers]]

address = "xx.xx.xx.xx:xx"

[[tcp.services.my-service.loadBalancer.servers]]

address = "xx.xx.xx.xx:xx"

Servers¶

Servers declare a single instance of your program.

The address option (IP:Port) points to a specific instance.

A Service with One Server -- Using the File Provider

## Dynamic configuration

tcp:

services:

my-service:

loadBalancer:

servers:

- address: "xx.xx.xx.xx:xx"

## Dynamic configuration

[tcp.services]

[tcp.services.my-service.loadBalancer]

[[tcp.services.my-service.loadBalancer.servers]]

address = "xx.xx.xx.xx:xx"

PROXY Protocol¶

Traefik supports PROXY Protocol versions 1 and 2 on TCP Services.

It can be enabled by setting proxyProtocol on the load balancer.

Below are the available options for the PROXY protocol:

version specifies the version of the protocol to be used. Either 1 or 2.

Version

Specifying a version is optional. By default, version 2 is used.
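For comparison with the v1 example that follows, here is a sketch that spells out the default version explicitly and includes a server entry. The address is a placeholder in the same style as the other examples on this page.

```yaml
## Dynamic configuration (illustrative sketch; address is a placeholder)
tcp:
  services:
    my-service:
      loadBalancer:
        proxyProtocol:
          # Same as the default, written out for clarity.
          version: 2
        servers:
          - address: "xx.xx.xx.xx:xx"
```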

A Service with Proxy Protocol v1 -- Using the File Provider

## Dynamic configuration

tcp:

services:

my-service:

loadBalancer:

proxyProtocol:

version: 1

## Dynamic configuration

[tcp.services]

[tcp.services.my-service.loadBalancer]

[tcp.services.my-service.loadBalancer.proxyProtocol]

version = 1

Termination Delay¶

Because Traefik acts as a proxy between a client and a server, either side (e.g. the client side) may decide to terminate its writing capability on the connection (i.e. by issuing a FIN packet). The proxy needs to propagate that intent to the other side, so when that happens, it does the same on its connection with the other side (e.g. the backend side).

However, if for some reason (bad implementation, or malicious intent) the other side does not eventually do the same, the connection would stay half-open, which would lock resources indefinitely.

To that end, as soon as the proxy enters this termination sequence, it sets a deadline on fully terminating the connections on both sides.

The termination delay controls that deadline. It is a duration in milliseconds, defaulting to 100. A negative value means an infinite deadline (i.e. the connection is never fully terminated by the proxy itself).
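As a sketch of the negative-value case described above, the configuration below sets an infinite deadline. The server address is a placeholder.

```yaml
## Dynamic configuration (illustrative sketch; address is a placeholder)
tcp:
  services:
    my-service:
      loadBalancer:
        # Negative value: the proxy never fully terminates the connection itself.
        terminationDelay: -1
        servers:
          - address: "xx.xx.xx.xx:xx"
```

With such a setting, half-open connections are only released when the other side closes them, so it is probably best reserved for backends that are trusted to do so.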

A Service with a termination delay -- Using the File Provider

## Dynamic configuration

tcp:

services:

my-service:

loadBalancer:

terminationDelay: 200

## Dynamic configuration

[tcp.services]

[tcp.services.my-service.loadBalancer]

terminationDelay = 200

Weighted Round Robin¶

The Weighted Round Robin (alias WRR) load-balancer of services is in charge of balancing the requests between multiple services based on provided weights.

This strategy is only available to load balance between services and not between servers.

Supported Providers

This strategy can be defined currently with the File or IngressRoute providers.

## Dynamic configuration

tcp:

services:

app:

weighted:

services:

- name: appv1

weight: 3

- name: appv2

weight: 1

appv1:

loadBalancer:

servers:

- address: "xxx.xxx.xxx.xxx:8080"

appv2:

loadBalancer:

servers:

- address: "xxx.xxx.xxx.xxx:8080"

## Dynamic configuration

[tcp.services]

[tcp.services.app]

[[tcp.services.app.weighted.services]]

name = "appv1"

weight = 3

[[tcp.services.app.weighted.services]]

name = "appv2"

weight = 1

[tcp.services.appv1]

[tcp.services.appv1.loadBalancer]

[[tcp.services.appv1.loadBalancer.servers]]

address = "private-ip-server-1:8080"

[tcp.services.appv2]

[tcp.services.appv2.loadBalancer]

[[tcp.services.appv2.loadBalancer.servers]]

address = "private-ip-server-2:8080"

Configuring UDP Services¶

General¶

Each of the fields of the service section represents a kind of service.

This means that, for each specified service, exactly one of the fields has to be enabled to define what kind of service is created.

Currently, the two available kinds are LoadBalancer and Weighted.

Servers Load Balancer¶

The servers load balancer is in charge of balancing the requests between the servers of the same service.

Declaring a Service with Two Servers -- Using the File Provider

## Dynamic configuration

udp:

services:

my-service:

loadBalancer:

servers:

- address: "xx.xx.xx.xx:xx"

- address: "xx.xx.xx.xx:xx"

## Dynamic configuration

[udp.services]

[udp.services.my-service.loadBalancer]

[[udp.services.my-service.loadBalancer.servers]]

address = "xx.xx.xx.xx:xx"

[[udp.services.my-service.loadBalancer.servers]]

address = "xx.xx.xx.xx:xx"

Servers¶

The Servers field defines all the servers that are part of this load-balancing group, i.e. each address (IP:Port) on which an instance of the service's program is deployed.

A Service with One Server -- Using the File Provider

## Dynamic configuration

udp:

services:

my-service:

loadBalancer:

servers:

- address: "xx.xx.xx.xx:xx"

## Dynamic configuration

[udp.services]

[udp.services.my-service.loadBalancer]

[[udp.services.my-service.loadBalancer.servers]]

address = "xx.xx.xx.xx:xx"

Weighted Round Robin¶

The Weighted Round Robin (alias WRR) load-balancer of services is in charge of balancing the requests between multiple services based on provided weights.

This strategy is only available to load balance between services and not between servers.

This strategy can only be defined with the File provider.

## Dynamic configuration

udp:

services:

app:

weighted:

services:

- name: appv1

weight: 3

- name: appv2

weight: 1

appv1:

loadBalancer:

servers:

- address: "xxx.xxx.xxx.xxx:8080"

appv2:

loadBalancer:

servers:

- address: "xxx.xxx.xxx.xxx:8080"

## Dynamic configuration

[udp.services]

[udp.services.app]

[[udp.services.app.weighted.services]]

name = "appv1"

weight = 3

[[udp.services.app.weighted.services]]

name = "appv2"

weight = 1

[udp.services.appv1]

[udp.services.appv1.loadBalancer]

[[udp.services.appv1.loadBalancer.servers]]

address = "private-ip-server-1:8080"

[udp.services.appv2]

[udp.services.appv2.loadBalancer]

[[udp.services.appv2.loadBalancer.servers]]

address = "private-ip-server-2:8080"